However, my resulting accuracy was slightly lower, probably because of the reduced batch size.

Just for fun, I blindly copied those model parameters and trained the model in the second IPython template.

Google has a model called "Onsets & Frames" with very good accuracy; see the following blog post:

The previous full TensorFlow model (not TensorFlow Lite) is used in my app for Windows 7 or later. To see it, click on the following screenshot:

To see my app for Android 4.4 KitKat (API level 19) or higher, click on the following screenshot:

This model is super-fast on my Android device, and its accuracy is still not bad. There is an example of using the model in my fifth IPython template ("5 TF Lite Inference.ipynb"). It takes approximately 1 second of raw audio as input (not 20 seconds of mel spectrogram). Or look for the link in the GitHub repository:

There is another pre-trained Magenta model in TensorFlow Lite format; it can be downloaded here:

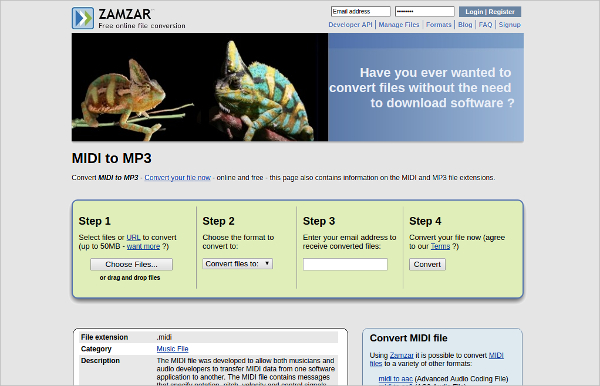
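Since the TensorFlow Lite model takes roughly one second of raw audio at a time, a longer recording has to be cut into fixed-length windows before inference. Here is a minimal sketch of that step in plain Python. The sample rate, the window length, and the function names are my assumptions for illustration, not values taken from the model; the real input length should be read from the model's input tensor shape.

```python
from typing import Iterator, List, Sequence

SAMPLE_RATE = 16_000     # assumed sample rate in Hz (hypothetical)
WINDOW_SAMPLES = 16_000  # assumed model input length, about 1 second (hypothetical)


def split_into_windows(samples: Sequence[float],
                       window: int = WINDOW_SAMPLES) -> Iterator[List[float]]:
    """Yield consecutive, non-overlapping windows of raw audio.

    The last window is zero-padded so that every window has the same length.
    """
    if window <= 0:
        raise ValueError("window must be positive")
    for start in range(0, len(samples), window):
        chunk = list(samples[start:start + window])
        # Pad the final chunk with silence up to the fixed window length.
        chunk.extend([0.0] * (window - len(chunk)))
        yield chunk


def window_start_seconds(index: int,
                         window: int = WINDOW_SAMPLES,
                         sample_rate: int = SAMPLE_RATE) -> float:
    """Return the time offset (in seconds) at which window `index` begins."""
    return index * window / sample_rate


if __name__ == "__main__":
    audio = [0.1] * 40_000  # 2.5 seconds of dummy audio at the assumed rate
    for i, chunk in enumerate(split_into_windows(audio)):
        print(i, len(chunk), window_start_seconds(i))
```

Each window would then be handed to the interpreter (for example via `tf.lite.Interpreter` with `set_tensor`, `invoke`, and `get_tensor`), and the per-window predictions shifted by `window_start_seconds` to place the notes on a common timeline. Non-overlapping windows are the simplest choice; notes that cross a window boundary may need extra handling.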

The accuracy will depend on the complexity of the song, and will obviously be higher for solo piano pieces.

IPython notebook templates for training a neural network and then using it to generate piano MIDI files from audio (MP3, WAV, etc.).

Automatic Polyphonic Piano Transcription with Recurrent Neural Networks
